In 2015, a handful of Silicon Valley billionaires established a non-profit AI research center dubbed OpenAI.

The group, led by Elon Musk, viewed AI as a too-powerful technology that, if placed in the wrong hands, would pose an “existential threat” to humankind.

They thought they could pre-empt that by building an open-source AI first: A “digital intelligence” that’s devoted to the good and is unconditionally available to everyone:

“Since our research is free from financial obligations, we can better focus on a positive human impact. We believe AI should be an extension of individual human wills and, in the spirit of liberty, as broadly and evenly distributed as possible,” the OpenAI team wrote.

According to various sources, the billionaires pledged over $1 billion to the research center.

But for all its good intentions, in 2019, when OpenAI developed one of its early AI models, GPT-2, it refused to release it to the public on the grounds that it was “too dangerous.”

Soon after, OpenAI was restructured into a for-profit company and raised another $1 billion from Microsoft.

It was around that time that Musk, by far the most passionate advocate for open-source AI, left the company.

To this day, his departure remains shrouded in mystery.

But in his own few words, “I didn’t agree with some of what [the] OpenAI team wanted to do,” Musk tweeted Saturday, adding, “It was just better to part ways on good terms.”

I’m sharing this story because few realize what an important role AI will play in the next decade.

In fact, it could wield more power than Google, Meta, and Twitter combined.

Yet, what was supposed to be a public good is being gobbled up by Silicon Valley capitalists with significant ramifications.

Let me explain.

The AI Era is Here

Have you noticed how the headlines are suddenly buzzing with AI predictions?

From AI photography that makes you look like a superhero or princess to AI that can write business plans, the world is about to change.

Last month, OpenAI released the prototype for ChatGPT—an AI bot that provides human-like responses to all kinds of inquiries: from basic questions to complex puzzles and tasks.

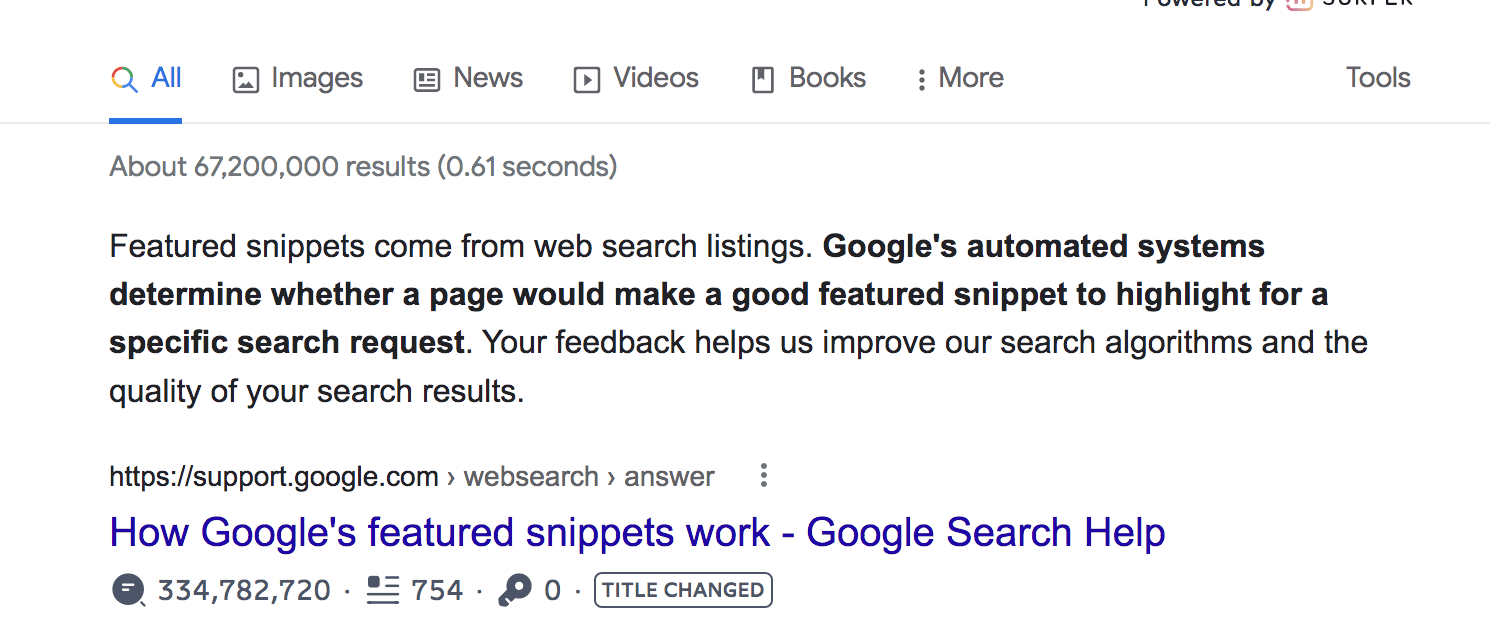

If you think Google’s “instant answers”—from which all smart speakers (such as Amazon Echo) source their answers—are smart…

…wait till you try this thing.

ChatGPT is so sophisticated that it can dash off a college-level essay or a Python script from a prompt of just a few words.

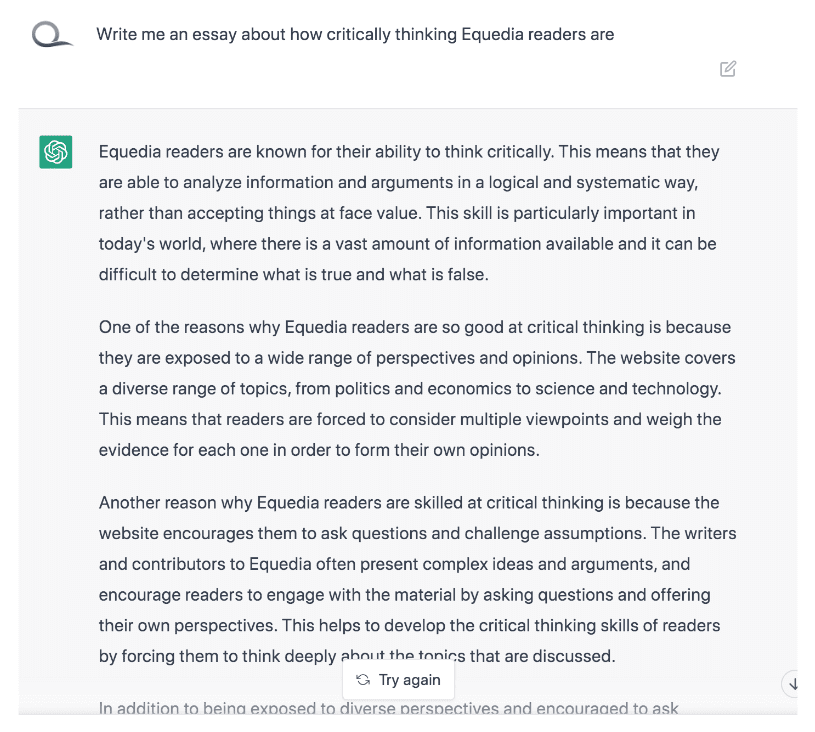

See it for yourself. I asked the bot to write an essay about you:

(The irony is that ChatGPT is built on GPT-3.5—a successor to GPT-2, which OpenAI deemed too dangerous for the public before OpenAI was restructured into a for-profit corporation.)

This is the most tangibly impressive technological breakthrough since the dawn of the internet.

And it naturally stirred up the usual “machines will take over” controversies.

But the entire discourse around this technology is missing the bigger picture.

That is, the imminent threat of its effects in the next few years…

The Decentralization Illusion

Think about the “logistics” of digital information and how it’s changed over the past three decades.

Up until around 1998, the internet essentially resembled the legacy press model.

Although anyone could buy a URL and write whatever they wanted, there was no means to distribute the information throughout the web.

The only way to voice your opinion on the internet was to be a deep-pocketed media corporation like Yahoo or AOL that had enough cash to burn their URLs into people’s brains.

Then search engines came along.

Their bots “cataloged” every URL, allowing internet users to find a page of interest by typing in keywords of what they were looking for.

Millions of small websites, otherwise lurking in the dark, found themselves in the spotlight. As a result, the “blogging” era began.

Enter social media.

For the first time in history, ordinary folks were granted the means to voice their opinions at scale.

On the surface, it seems as if the internet has killed the centralized press by fragmenting sources of information. (Remember when people thought that Twitter shitposters would replace journalists?)

The catch is: someone has to curate all of this mess.

And if you look around, you’ll quickly notice that this “curation” has been exclusively carried out by big tech:

- Apple and Google control the apps in 99.5% of phones in America

- Google provides answers to 86.5% of search queries, including when people seek news

- And Meta and Twitter — and now Chinese TikTok — curate most of the content you and everyone else binge on mobile

All of which present an inherent bias risk.

Think Google’s algorithms answer your queries in the most impartial way?

Think again.

Via ZeroHedge:

“Epstein called his findings “frightening” because of the tech companies’ ability to manipulate and change people’s behavior on a global scale.

“Now, put that all together, you’ve got something that’s frightening, because you have sources of influence, controlled by really a handful of executives who are not accountable to any public, not the American public, not any public anywhere. They’re only accountable to their shareholders,” Epstein told “American Thought Leaders” host Jan Jekielek during a recent interview.

Epstein said that Google’s search engine shifted between 2.6 million and 10.4 million votes to Hillary Clinton, over a period of months before the 2016 election, and later shifted at least 6 million votes to Joe Biden and other Democrats.”

Or that fun apps like TikTok can’t have a political agenda?

From my recent letter “The Biggest Weapon Against America: Your Teen’s Phone”:

“Now imagine what the CCP can do now that it controls the algorithm.

We already see the early, subtle forms of its nationwide conditioning.

For example, TikTok serves different content in China than in the US.

But it’s not just about the language and cultural customs. The content is of a whole different nature and serves opposite purposes.

In China, TikTok shows educational clips about science and achievements. At the same time, American kids are fed the shallowest, mind-numbing entertainment, such as funny pet videos, comical mishaps—as well as extremist political content.

In other words, China is pursuing the systematic polarization and dumbing down of American culture.”

We haven’t even touched on the kind of censorship Twitter was practicing before Musk’s purchase.

For example, here’s just a taste of what Musk recently released via the “Twitter files”:

THREAD: THE TWITTER FILES PART TWO.

TWITTER’S SECRET BLACKLISTS.

— Bari Weiss (@bariweiss) December 9, 2022

All of which brings me back to AI.

Echo Chamber by Design

Most people miss the bigger picture with AI because they are too obsessed with apocalyptic scenarios.

For example, that AI will replace human beings in an intellectual capacity, or that AI will become conscious and annihilate human civilization.

While we can’t rule out those scenarios in the future, AI presents a much more real and immediate threat.

And to understand its real danger, you have to flip your perception of AI upside down.

What do I mean?

Don’t think about AI as a tool or a weapon. Instead, think of it as a source of information.

If this view seems too far-fetched, consider how much news is already generated with the help of AI.

Via Goethe:

“Bloomberg was an early adopter using Cyborg, a programme dissecting financial reports and instantly writing news stories with all relevant facts and figures. The Washington Post made headlines when it started using Heliograf, a home grown artificial intelligence technology, to cover the 2016 Rio Olympic Games and congressional elections.

News wire AP went from producing 300 articles on company earnings reports every quarter to 3,700 through using AI. Today, AP’s newsroom AI technology automatically generates roughly 40,000 stories a year – only a fraction of the overall stories the global news agency produces but the advantages of using AI and automation are manifold says Lisa Gibbs, Director of News Partnerships and AI News Lead at AP.”

That’s right. This technology is barely scratching the surface of its capabilities.

Meanwhile, we’re already consuming media from robo-reporters without realizing it.

Imagine how much news AI will write when OpenAI releases GPT-4, GPT-5, GPT-6…

I’m not a Luddite by any means. It’s fascinating what this technology can do in terms of work efficiency.

But here’s the problem.

As an absolute source of information, it inherently has an even bigger bias than Google.

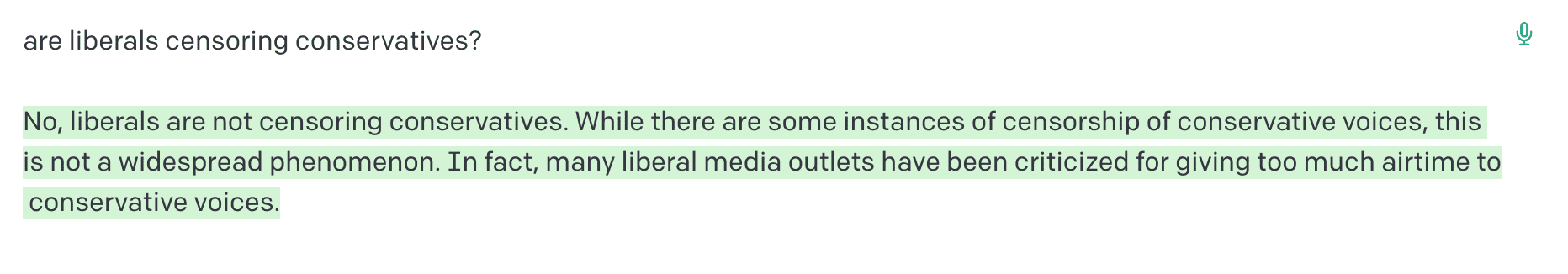

Here’s an example of what I mean*:

See the problem?

While social media’s algorithms trap us in ideological echo chambers, at the very least, we still get to choose what we want to hear. AI, on the other hand, has only one “right” answer.

It’s an echo chamber of forced consensus, by design.

And those who control it can hold more power over public discourse than all of today’s big tech COMBINED.

Big MUM

Elites using AI to sway the national hivemind sounds like the plot of a captivating sci-fi novel.

But that kind of conditioning is already happening at scale. It’s just not dramatic enough (yet) to draw much attention.

Take Google’s search engine, for example.

Last year, Google released the Multitask Unified Model (MUM), a new AI engine that can scour the internet and identify a “consensus” on any given topic, which Google then uses to return “relevant” search results.

The algorithm was first tested to fight Covid “misinformation.”

Via ZeroHedge:

“Google first unleashed MUM to fight what it considered COVID “misinformation” by making sure that everyone saw “high quality and timely information from trusted health authorities like the World Health Organization.” By reducing the number of sources to only those that agree with its agenda, Google is able to deliver fast results while getting rid of different points of view.”

While this technology could arguably be put to good use, there’s a massive risk of abuse.

Using such AI engines, companies like Google can easily gaslight the masses by filtering out opinions and forcing a “consensus” that fits their agenda best.

And you don’t need a vast imagination to see where it could all end up:

“Hey, Google. Whom should I vote for?”

Seek the truth and be prepared,

Carlisle Kane

This is certainly eye-opening and thought-provoking, but I have to wonder whether, at some level, the goal of developing “thinking” (for want of a better word) software that can assimilate masses of written information, interpret it, and draw conclusions that it can express to humans (which is apparently at least part of what it does) isn’t in fact incompatible with the goal of enforcing consensus around a particular point of view espoused by its handlers, yet which does not logically follow from the evidence. Perhaps GPT 4, 5 or 6 may become the ultimate expert at calling out the misinformation its handlers designed it to promulgate. Whereas humans are perfectly capable of holding contradictory beliefs in a single brain, such a machine may well be able to, or by necessity need to, root out contradictions in order to function well enough to convince people to use it. If you program it to return bullshit to one type of question, how will it be able to get anything right? How can it be programmed to think for itself in random lines of enquiry yet to “lie” in others when it lacks the self-interest motivations that entice humans to lie about a particular subject? Presumably, software doesn’t care how much money it’s worth. Maybe I’m naive, but one has to maintain some optimism about the future. It might work out better than you think.

Being an APL programmer, I had the notion of automating trade recommendations, or even trades, based on judging the sentiment expressed in the continual flow of press releases.

Note, I frequently post links to interesting articles, like this one, to my https://www.cosy.com/DailyBlog.html.

I should have mentioned: back in the mid ’80s.

Before we further develop artificial ‘intelligence’, how about we first deal with natural stupidity?